According to benchmark tests reported by researchers and programmers, glob is faster than other methods for matching pathnames within directories. Glob patterns follow standard Unix path expansion rules. In Python, the glob module retrieves files and pathnames that match the pattern passed as its parameter.
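As a rough illustration of that usage, the sketch below builds a small throwaway directory tree and matches it with `glob.glob`. The file names and the helpers `find_matches` and `build_demo_tree` are made up for this example; the `recursive=True` flag together with `**` assumes Python 3.5 or later.

```python
import glob
import os
import tempfile


def find_matches(root, pattern, recursive=False):
    """Return sorted paths under root that match a shell-style pattern."""
    return sorted(glob.glob(os.path.join(root, pattern), recursive=recursive))


def build_demo_tree(root):
    """Create a tiny, hypothetical directory tree for the demonstration."""
    os.makedirs(os.path.join(root, "sub", "deep"))
    for rel in ("a.txt", "b.log", os.path.join("sub", "c.txt"),
                os.path.join("sub", "deep", "d.txt")):
        with open(os.path.join(root, rel), "w") as handle:
            handle.write("demo\n")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        build_demo_tree(root)
        # "*" does not cross directory boundaries: only a.txt matches here.
        print(find_matches(root, "*.txt"))
        # "**" with recursive=True also descends into subdirectories.
        print(find_matches(root, os.path.join("**", "*.txt"), recursive=True))
```

Without `recursive=True`, `**` behaves like a plain `*`, so the flag and the pattern have to be used together.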

Glob in Python:įrom Python 3.5 onwards, programmers can use the Glob() function to find files recursively. Unix, Linux systems, and the shells are some systems that support glob and also render glob() function in system libraries. Glob is a common term used for defining various techniques used for matching established patterns as per the rules mentioned in the Unix shell. In this article, you will learn about the glob() function that helps in finding files recursively through Python code. Regular Expressions or regex play a significant role in recursively finding files through Python code. This can be done using the concept of the regular expression. I'd likely just use find /path/ -name "*.ext" | xargs grep -l, or split it up into two steps - but do them both in a screen/tmux session.Recursively accessing files in your local directory is an important technique that Python programmers need to render in their application for the lookup of a file. Plus, lazy sysadmin is lazy sysadmin (I'm a lazy sysadmin). Now, granted, keep in mind, all of this is assuming you want this as fast as possible, and it's worth the time. So, I think in both filesystem architecture scenarios (structure loaded in memory vs structure stored in directories) it pays off to thread. Plus, it might be beneficial at that point to let the filesystem buffer your reads whenever possible anyway. Granted, once you've got a list of files to look at closer, you're going to be bouncing around already. Instead, read out those directories in the order from the disk, and then do what you want in memory. In this scenario you would likely be hurt by multiple threads - you're bouncing the head back and forth around the directory stuff, scanning stuff, and it's likely just going to hurt you. If this is the case, the entire filesystem does not end up in memory, but sits on disk until it's accessed the first time, and then remains in a cache under certain conditions. 
However, I suspect that ext4 actually stores the directory contents in the blocks for the directory. If that's so, you could actually benefit from threading - multiple calls against that fs driver would hit locations in RAM. The paths, and how 'the OS knows them' (in the linux world at least) is not really a standard across the filesystem, but specific to the filesystem driver.Įxt4 might load up all the inodes and paths into a live tree in memory, and then keeps that in memory. I thought about using subprocess.call with a bash call with find -exec mv, but i'd have to install Cygwin and would rather not unless that's the only way to make this work well. just trying to get the search part down first.Ĭan anyone help with this? I feel like I'm going the wrong direction here, but not quite sure how to fix it. I'd also prefer to do the work of moving the files to the 'RESTORE_PATH' in the script when it finds them, but i haven't gotten that far yet.

I haven't done any testing with my code yet, but I'm worried that it's just going to be too slow, because from what i can tell it's going to be looping over every directory & file for each iteration of the for loop, which is going to take ages.

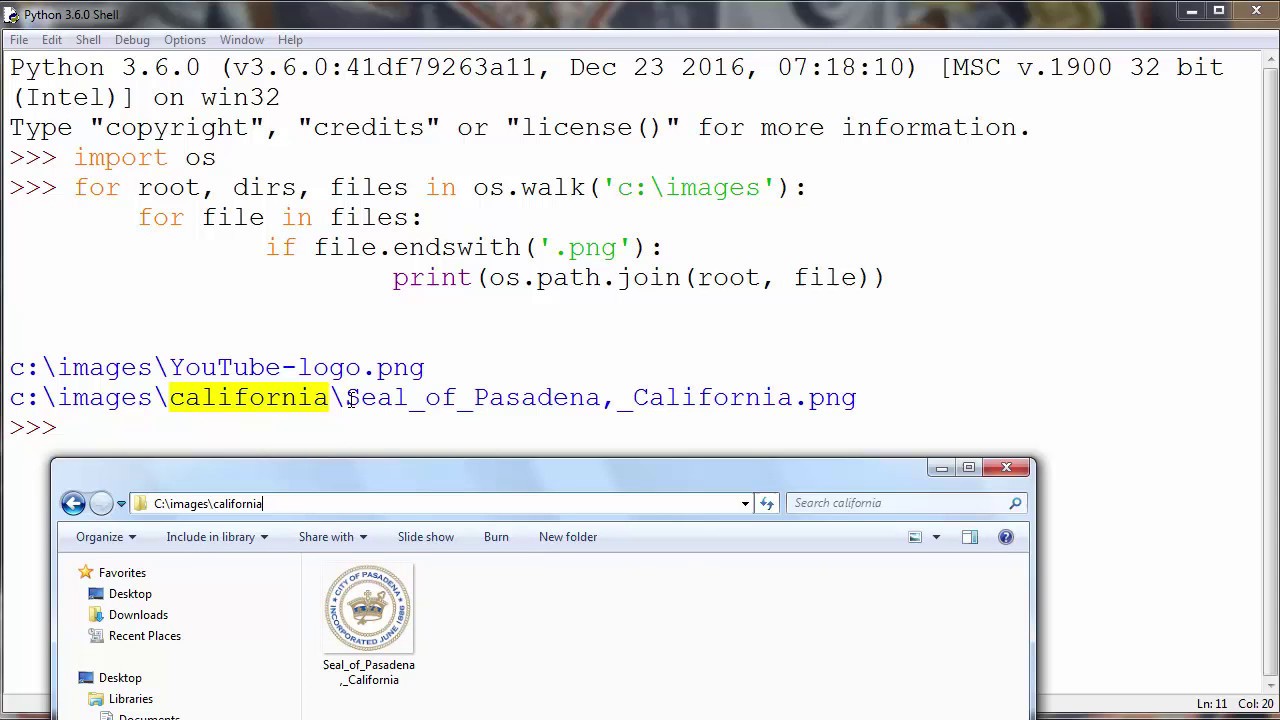

The best/closest thing I could find searching online was writing a nested for loop utilizing os.walk and fnmatch.filter. So with that in mind (slow NTFS file system + millions of files) I'd like to write a solution that's fast (doesn't require looping through all the directories & files multiple times). Across all of the directories there are milliions of files. Inside each of the deepest directories are 10's of thousands of files. They are on a Windows server (NTFS) and each subdirectory is broken up into 2 dirs which is broken up into 16 dirs, each of which are broken up into 16 dirs. The biggest hurdle is just sheer number of files I need to sift through. I have a text file with the list of file names, excluding extensions (which vary) and there could be multiple files with the same base filename from the text file. Ok, I have a problem i'm trying to solve at work that involves searching for a list of files that need to be moved or copied out into a temp holding directory for manual inspection.
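For the worry about looping over every directory once per file name, one possible approach is to load the names into a set and walk the tree a single time, checking each file's base name against that set. The sketch below is only an illustration under stated assumptions: the helper names `load_wanted`, `find_by_basename` and `copy_matches` are hypothetical, the list file is assumed to hold one base name per line, and names are lower-cased on the assumption that NTFS lookups are case-insensitive.

```python
import os
import shutil
from collections import defaultdict


def load_wanted(list_path):
    """Read base file names (no extension), one per line, into a set."""
    with open(list_path, encoding="utf-8") as handle:
        # Lower-casing is an assumption: NTFS lookups are normally case-insensitive.
        return {line.strip().lower() for line in handle if line.strip()}


def find_by_basename(root, wanted):
    """Walk the tree once and map each wanted base name to every matching path."""
    found = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            # splitext drops only the last extension ("a.tar.gz" -> "a.tar").
            stem = os.path.splitext(name)[0].lower()
            if stem in wanted:  # set membership is O(1) on average
                found[stem].append(os.path.join(dirpath, name))
    return found


def copy_matches(found, restore_path):
    """Copy every match into restore_path (flat); return the number copied."""
    os.makedirs(restore_path, exist_ok=True)
    copied = 0
    for paths in found.values():
        for src in paths:
            shutil.copy2(src, restore_path)
            copied += 1
    return copied
```

Because the copy is flat, two matches with identical file names in different directories would overwrite each other in the 'RESTORE_PATH' directory; keeping part of the source path in the destination name would avoid that. Swapping `shutil.copy2` for `shutil.move` would move the files instead of copying them.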
